Background

Recall that the goal of the 605 course is not only to improve critical appraisal skills, but also to think about research questions, designs, and the compromises that research often requires. Hopefully this adds another dimension to typical journal clubs, where an article is either enthusiastically and uncritically endorsed or trashed unmercifully, although the latter is sometimes quite merited.

This week’s selected article was the 2023 publication “Breaking Up Prolonged Sitting to Improve Cardiometabolic Risk: Dose–Response Analysis of a Randomized Crossover Trial”, which concluded:

The present study provides important information concerning efficacious sedentary break doses. Higher-frequency and longer-duration breaks (every 30 min for 5 min) should be considered when targeting glycemic responses, whereas lower doses may be sufficient for BP lowering.

In this paper, of 25 participants who attended a screening visit, 18 were randomized and 11 completed the study, a randomized crossover trial that compared 5 conditions to examine the acute effects of multiple doses of a light-intensity walking-based sedentary break intervention on cardiometabolic risk factors among middle- and older-age adults. The trial conditions consisted of one uninterrupted sedentary (control) condition and four acute (experimental) conditions that entailed different sedentary break frequency/duration combinations: (1) light-intensity walking every 30 min for 1 min, (2) light-intensity walking every 30 min for 5 min, (3) light-intensity walking every 60 min for 1 min, and (4) light-intensity walking every 60 min for 5 min. As the largest response was for glucose differences, I will restrict this commentary to that outcome.

Before reanalyzing their data, let’s stop for a moment and ask ourselves the following question:

How likely is it that a study with only 11 individuals can detect a meaningful difference in glucose measurements with these anti-sedentary strategies? Would one expect the differences to be so large that they could be detected with so small a sample?

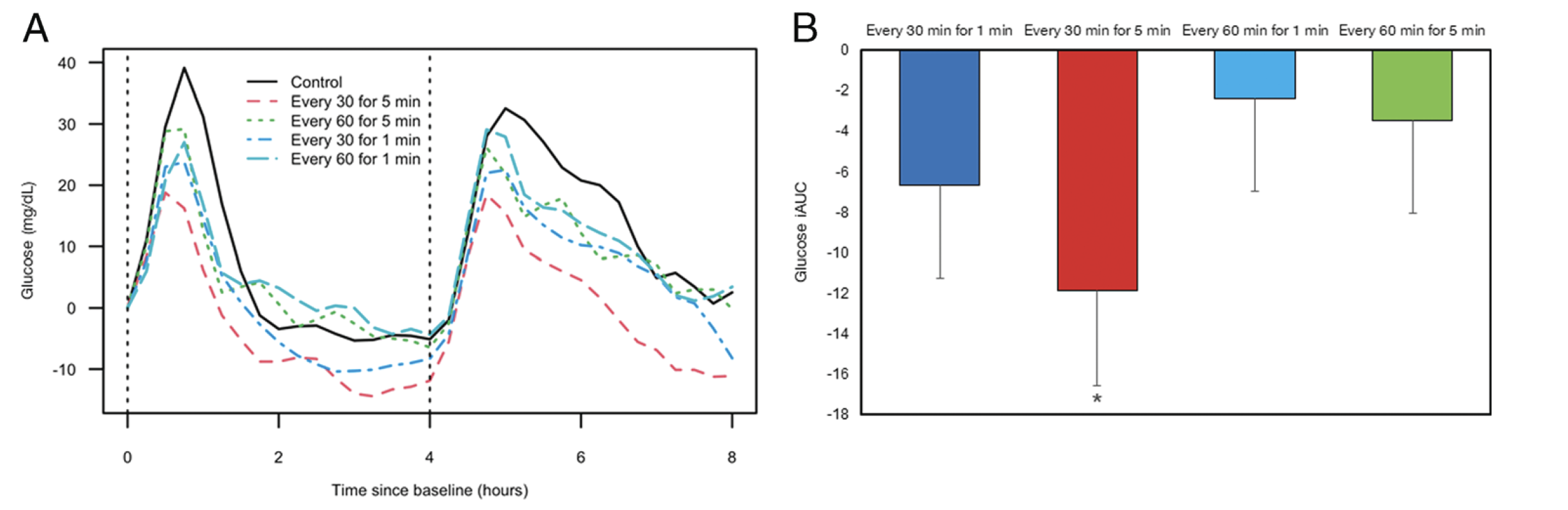

In any case, their conclusion appears to be supported by their published Figure 1, as shown here.

This figure is remarkable for 2 main points:

1. The early separation between the control and intervention groups, which appears greatest for 5 minutes of exercise every 30 minutes.

2. The outcome is not the glucose level in each randomized condition but the difference in level compared to the control (baseline) group.

The early outcome difference

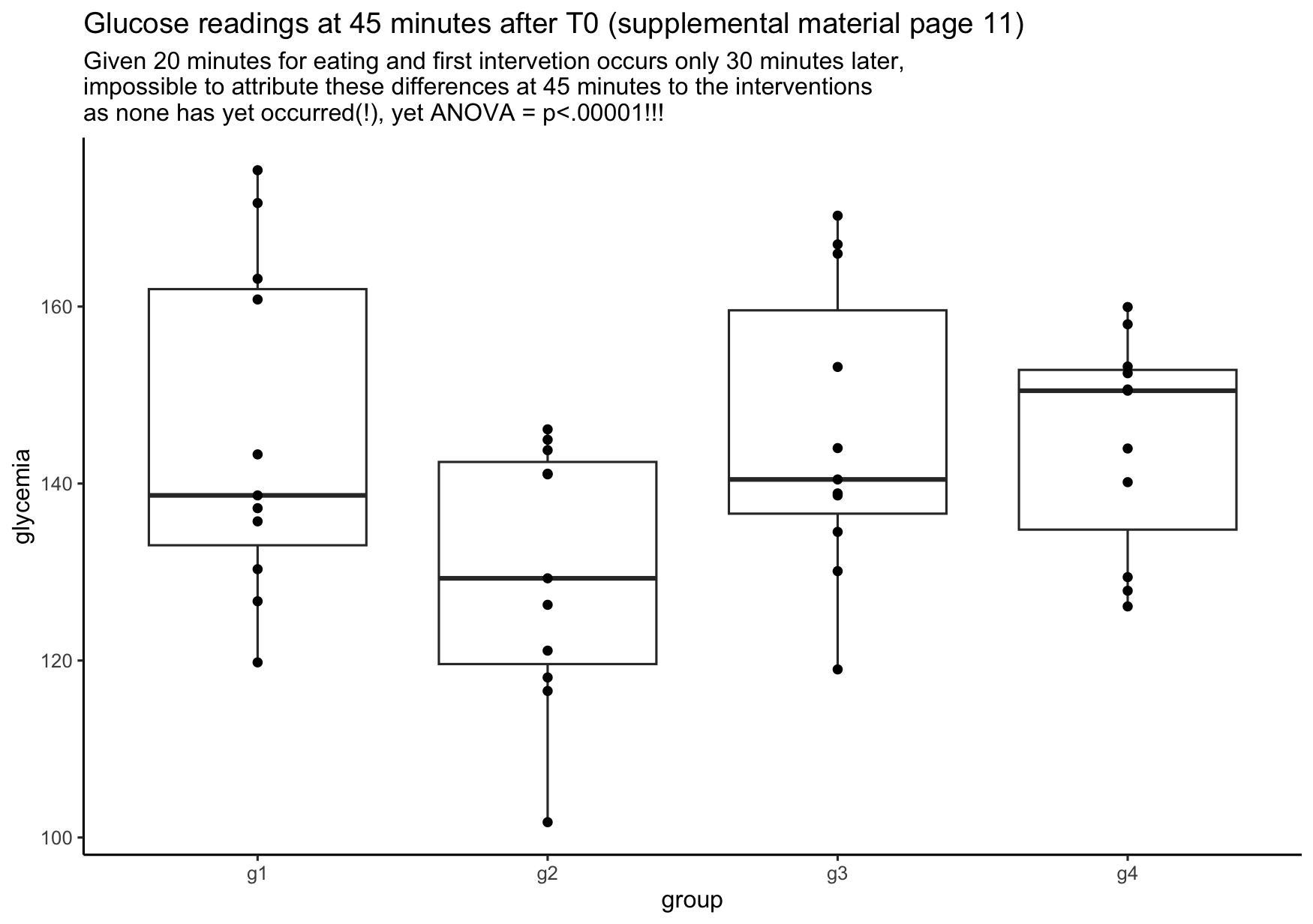

In the supplemental material, the authors report summary glucose levels for each group at 15-minute intervals. Using these values, we may simulate the glucose measurements for each group. Let’s consider the values at 45 minutes after T0. Given that the authors state that the first 20 minutes are assigned to a standardized meal and that no intervention occurs before 30 minutes, any variation in the 45-minute values can’t be due to the intervention. Yet plotting and analyzing these data (ANOVA) reveals the following:

Analysis of variance using outcome of group glucose - control glucose

| Term | Df | Sum Sq | Mean Sq | F value | Pr(>F) |
|---|---|---|---|---|---|
| group | 3 | 1953 | 651 | 10 | 0.000046 |
| Residuals | 40 | 2594 | 65 | | |

Finding these large differences even before any of the interventions could take effect should be a red flag for reservations about the final conclusions.
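The F value in this table can be checked directly from the reported sums of squares and degrees of freedom. The short Python sketch below does only that arithmetic; the function name `f_from_anova_table` is illustrative and is not part of the original analysis.

```python
def f_from_anova_table(ss_group, df_group, ss_resid, df_resid):
    """Return (mean square group, mean square residual, F) from ANOVA table entries."""
    ms_group = ss_group / df_group
    ms_resid = ss_resid / df_resid
    return ms_group, ms_resid, ms_group / ms_resid


# Sum Sq and Df values copied from the ANOVA output above.
ms_group, ms_resid, f_value = f_from_anova_table(1953, 3, 2594, 40)
print(f"MS group = {ms_group:.0f}, MS residual = {ms_resid:.0f}, F = {f_value:.1f}")
# prints "MS group = 651, MS residual = 65, F = 10.0"
```

The degrees of freedom (3 and 40) are consistent with 4 intervention conditions and 44 difference scores, i.e. 11 participants per condition.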

The outcome measure

Using the difference in glucose levels between the active treatment strategies and the control (baseline) group as the outcome measure is a potentially fatal flaw. Bland and Altman have published about why this “is biased and invalid, producing conclusions which are, potentially, highly misleading. The actual alpha level of this procedure can be as high as 0.50 for two groups and 0.75 for three”. In short, we need to remember what is being randomized: the assignment to a given treatment strategy. Individuals are not randomized to their baseline glucose levels, any more than they are randomized to their weights, heights, eye color, or any other characteristic. With small sample sizes, we may well expect meaningful differences in these characteristics even in randomized samples, so including them as a component of the outcome is not appropriate and may bias the results.
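One way to see how figures such as 0.50 and 0.75 can arise: suppose every group truly changes from baseline by the same amount, the groups are independent, and each within-group test against baseline has the same power. A spurious "difference between groups" is then declared whenever the groups disagree on significance. The Python sketch below computes that probability; the function name and the choice of 50% power are illustrative assumptions, not something stated in this post.

```python
def prob_groups_disagree(power, n_groups):
    """P(some but not all within-group tests are significant), assuming
    independent groups with identical true change and identical power."""
    all_significant = power ** n_groups
    none_significant = (1 - power) ** n_groups
    return 1 - all_significant - none_significant


# Assumed per-group power of 0.5, which maximises the disagreement probability.
for k in (2, 3):
    print(k, prob_groups_disagree(0.5, k))
# prints "2 0.5" then "3 0.75"
```

Under these assumptions there is no true difference between groups at all, yet the procedure would report one half of the time with two groups and three quarters of the time with three.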

Harrell lists the many assumptions that must be met before an analysis of change from baseline could (potentially) be used:

i. the variable is not used as an inclusion/exclusion criterion for the study, otherwise regression to the mean will be strong

ii. if the variable is used to select patients for the study, a second post-enrollment baseline is measured and this baseline is the one used for all subsequent analysis

iii. the post value must be linearly related to the pre value

iv. the variable must be perfectly transformed so that subtraction “works” and the result is not baseline-dependent

v. the variable must not have floor and ceiling effects

vi. the variable must have a smooth distribution

vii. the slope of the pre value vs. the follow-up measurement must be close to 1.0 when both variables are properly transformed (using the same transformation on both)

With this in mind, this study doesn’t meet these assumptions: according to their CONSORT Fig 1, glucose was an entry criterion, so regression to the mean may be present; glucose has a definite “floor effect”; and there is no evidence that the relationship between the pre and post values is linear.
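Regression to the mean under selection can be illustrated with a small simulation. The Python sketch below is a toy example, not a reanalysis of the trial: the glucose mean, the noise level, and the inclusion threshold are all hypothetical values chosen for illustration, and there is no treatment effect in the simulated data.

```python
import random
import statistics


def mean_change_after_selection(n=20000, threshold=110.0, seed=1):
    """Simulate baseline and follow-up glucose with NO treatment effect, keep only
    subjects whose baseline meets the threshold, and return their mean change."""
    rng = random.Random(seed)
    changes = []
    for _ in range(n):
        true_level = rng.gauss(100.0, 10.0)            # hypothetical stable glucose (mg/dL)
        baseline = true_level + rng.gauss(0.0, 10.0)   # screening value with measurement noise
        follow_up = true_level + rng.gauss(0.0, 10.0)  # later value, same true level
        if baseline >= threshold:                      # hypothetical inclusion criterion
            changes.append(follow_up - baseline)
    return statistics.mean(changes), len(changes)


mean_change, n_selected = mean_change_after_selection()
print(f"selected {n_selected} subjects, mean change = {mean_change:.1f} mg/dL")
```

Because subjects are selected for a high noisy baseline, their follow-up values drift back toward the population mean, so the mean change is clearly negative even though nothing was done to them.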

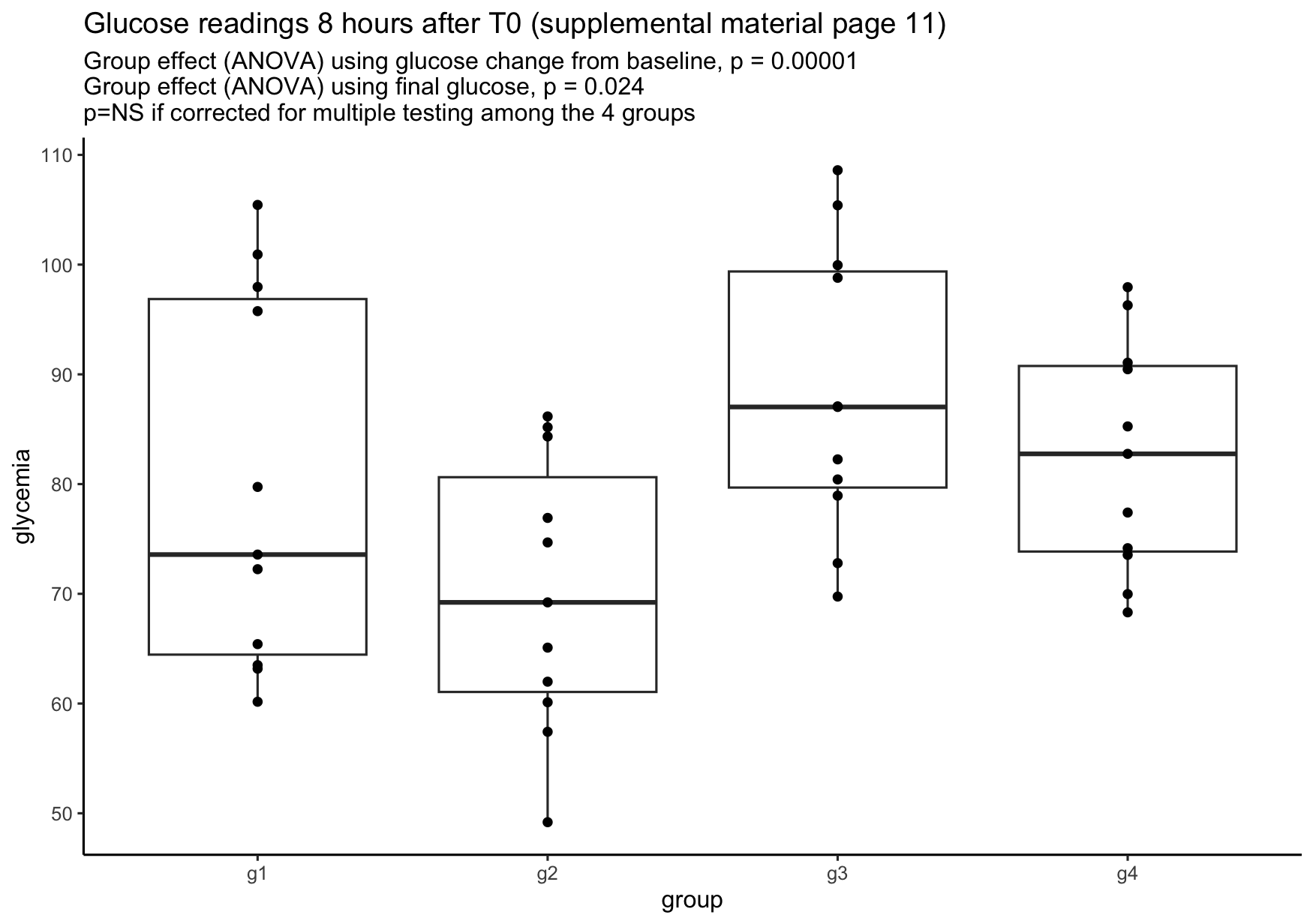

To demonstrate the bias inherent in using change from baseline as opposed to the final glucose reading alone, let’s again use the simulated data. We will analyze (ANOVA) and plot the outcome according to treatment, where the outcome is i) the final glucose reading in each group or ii) the change from baseline for each group.

This suggests the original analysis is quite wrong and that there is likely no difference between the strategies being tested. This is no surprise: with only 11 (or 9) subjects, even with a crossover design, the differences would have to be very large to reach statistical significance. See the common-sense response to the opening question above.
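To put a rough number on "very large", the Python sketch below computes the smallest mean within-subject difference that a paired (crossover) comparison could detect, expressed in units of the standard deviation of the within-subject differences. It uses a normal approximation, and the 5% two-sided alpha and 80% power are conventional assumptions rather than values taken from the paper.

```python
from math import sqrt
from statistics import NormalDist


def min_detectable_effect(n, alpha=0.05, power=0.80):
    """Smallest mean paired difference, in SD units of the within-subject
    differences, detectable by a two-sided test (normal approximation)."""
    z = NormalDist()
    return (z.inv_cdf(1 - alpha / 2) + z.inv_cdf(power)) / sqrt(n)


for n in (9, 11):
    print(n, round(min_detectable_effect(n), 2))
```

With 9 to 11 subjects the detectable difference is roughly 0.8 to 0.9 standard deviations, and the normal approximation is optimistic at these sample sizes, so a t-based calculation would require an even larger effect.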

There are some additional discussion points to consider.

1. While 18 subjects were randomized, only 11 were analyzed, and 2 of them had missing values. This raises the possibility of a non-quantifiable selection bias.

2. The authors report a post-hoc power calculation, a statistically inappropriate and nonsensical technique. If a nonsignificant finding is obtained, the power to detect the observed effect size will always be low, because observed power is directly determined by the obtained P value and so provides no information beyond it.

3. There is no discussion of whether these measured outcomes have any clinical relevance. Suppose, against all reason, that the true difference in glucose incremental area under the curve for the best treatment strategy was indeed the reported -11.8 mg% over 8 hours. This translates to about 1.5 mg% / hour over an 8-hour day, or about 0.49 mg% / hour over 24 hours. How likely is it that so small (i.e., trivial) a difference would have any meaningful clinical effect? It is important to recall the adage:

“Measure what is important and don’t make important what you can measure”
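Returning to the post-hoc power point in item 2 above, the one-to-one link between observed power and the P value can be made concrete. The Python sketch below assumes a two-sided z-test and treats the observed effect as if it were the true effect, which is what a post-hoc power calculation does; the function name is illustrative.

```python
from statistics import NormalDist


def observed_power(p_value, alpha=0.05):
    """'Observed' power of a two-sided z-test, computed by treating the observed
    effect as the true effect. It depends only on the p-value and alpha."""
    z = NormalDist()
    z_obs = z.inv_cdf(1 - p_value / 2)  # |z| statistic implied by the p-value
    z_crit = z.inv_cdf(1 - alpha / 2)   # rejection threshold at level alpha
    return z.cdf(z_obs - z_crit) + z.cdf(-z_obs - z_crit)


for p in (0.01, 0.05, 0.20, 0.50):
    print(p, round(observed_power(p), 2))
```

A result sitting exactly at P = 0.05 has an observed power of about 50%, and any larger P value gives a lower observed power, so reporting it adds nothing that the P value did not already convey.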

Citation

@online{brophy2024,

author = {Brophy, Jay},

title = {Does It Make a Difference - Sedentary Break},

date = {2024-03-05},

url = {https://brophyj.github.io/posts/2024-02-19-my-blog-post/},

langid = {en}

}